Abstract

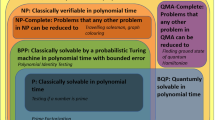

In a recent breakthrough paper Braverman et al. (in: STOC’13, pp 151–160, 2013) developed a local characterization for the zero-error information complexity in the two-party model, and used it to compute the exact internal and external information complexity of the 2-bit AND function. In this article, we extend their results on AND function to the multi-party number-in-hand model by proving that the generalization of their protocol has optimal internal and external information cost for certain natural distributions. Our proof has new components, and in particular, it fixes a minor gap in the proof of Braverman et al.

Similar content being viewed by others

References

Bar-Yossef, Z., Jayram, T.S., Kumar, R., Sivakumar, D.: An information statistics approach to data stream and communication complexity. J. Comput. Syst. Sci. 68(4), 702–732 (2004)

Braverman, M., Garg, A., Pankratov, D., Weinstein, O.: From information to exact communication (extended abstract). In: STOC’13, pp. 151–160. ACM (2013)

Braverman, M., Garg, A., Pankratov, D., Weinstein, O.: Information lower bounds via self-reducibility. Theory Comput. Syst. 59(2), 377–396 (2016)

Braverman, M., Rao, A.: Information equals amortized communication. IEEE Trans. Inf. Theory 60(10), 6058–6069 (2014)

Chakrabarti, A., Khot, S., Sun, X.: Near-optimal lower bounds on the multi-party communication complexity of set disjointness. In: Proceedings of 18th IEEE Annual Conference on Computational Complexity, 2003., pp. 107–117. IEEE (2003)

Cover, T.M., Thomas, J.A.: Elements of Information Theory. Wiley, Hoboken (2012)

Dagan, Y., Filmus, Y., Hatami, H., Li, Y.: Trading information complexity for error. Theor. Comput. 14(6), 1–73 (2018)

Gronemeier, A.: Nof-multiparty information complexity bounds for pointer jumping. In: International Symposium on Mathematical Foundations of Computer Science, pp. 459–470. Springer (2006)

Gronemeier, A.: Asymptotically optimal lower bounds on the nih-multi-party information. arXiv preprint arXiv:0902.1609 (2009)

Kushilevitz, E., Nisan, N.: Communication Complexity. Cambridge University Press, Cambridge (1997)

Ma, N., Ishwar, P.: Some results on distributed source coding for interactive function computation. IEEE Trans. Inf. Theory 57(9), 6180–6195 (2011)

Ma, N., Ishwar, P.: The infinite-message limit of two-terminal interactive source coding. IEEE Trans. Inf. Theory 59(7), 4071–4094 (2013)

Pankratov, D.: Communication complexity and information complexity. Ph.D. thesis, The University of Chicago (2015)

Razborov, A.A.: On the distributional complexity of disjointness. Theor. Comput. Sci. 106(2), 385–390 (1992)

Yao, A.C.C.: Some complexity questions related to distributive computing(preliminary report). STOC’79, pp. 209–213. ACM (1979)

Funding

Yuval Filmus is supported by Israel Science Foundation (Grant No. 2022103), Hamed Hatami is supported by an NSERC grant.

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Appendix

The computational results of the two-party \({{\mathrm{AND}}}_2\) in [2, Section 7.7] show the concavity term (the one that we want to verify its non-negativity) can be of order \(\varepsilon ^2\). We see in Sect. 4.6 that our computation is of order \(\varepsilon ^3\). This is because we choose to focus our computation, as allowed by a series of reductions, on the time interval \([-\gamma _0, \gamma _1]\) only. Claim 1 below shows an order \(\varepsilon ^2\) term can appear too if the whole range is considered.

Claim 1

Suppose \(s \geqslant 2\) and \(L = |t_{s-1}| > 0\). If \(\gamma _0 \leqslant L/2\), then

and

Proof

Consider external case first. Let \(\mu '\) be defined as

Then \(\mu ^0= \mu + \varepsilon \mu '\) and \(\mu ^1= \mu - \varepsilon \mu '\), hence \(f^0(\pi ^m_t)= f(\pi ^m_t)+ \varepsilon \sum \mu '_x f_x(\pi ^m_t)\) and \(f^1(\pi ^m_t)= f(\pi ^m_t)- \varepsilon \sum \mu '_x f_x(\pi ^m_t)\). Note that \(f(\pi ^m_t) = 0\) (in our case this happens when \(m \geqslant s\)) implies \(\mathrm {concav}_\mu ^{ext}(t) = 0\). On the other hand, when \(f(\pi ^m_t) \ne 0\) (i.e., \(1 \leqslant m \leqslant s-1\)), using Taylor expansion at the point \(f(\pi ^m_t)\) for functions \(\phi (f^0(\pi ^m_t))\) and \(\phi (f^1(\pi ^m_t))\), and expansion at \(\mu _x f_x(\pi ^m_t)\) for functions \(\phi (\mu ^0_x f^0_x(\pi ^m_t))\) and \(\phi (\mu ^1_x f^1_x(\pi ^m_t))\), we obtain (note here we won’t have \(\varepsilon ^3\))

By Statement 4 one can assume \(\mu _{e_s} = \cdots = \mu _{e_k}\) and \(\mu _{e_1} = \cdots = \mu _{e_{s-1}} = \mu _{e_s} e^{-L}\), thus \(\mu _{\mathbf {0}} = 1 - (k-s+1) \mu _{e_s} - (s-1) \mu _{e_s} e^{-L}\). Then \(t_{s-1} = 0\) and \(t_s = L\). As \(\gamma _0 \leqslant L/2\) implies \(t_s - \gamma _0 = L - \gamma _0 \geqslant L/2\), and (66) says the integrand is non-negative, hence a lower bound is given by the integration of (66) in the range [0, L / 2]. For \(t \in [0, L/2]\) and \(1 \leqslant m \leqslant s-1\), we have \(f_{\mathbf {0}} (\pi _t^m) = f_{e_s} (\pi _t^m) = \cdots =f_{e_k} (\pi _t^m) = e^{-(s-1)t}\), and \(f_{e_1} (\pi _t^m) = \cdots =f_{e_{s-1}} (\pi _t^m) = e^{-(s-2)t}\), thus \(f(\pi _t^m) = (1 - (s-1) \mu _{e_s} e^{-L} + (s-2) \mu _{e_s} {{\mathrm{e}}}^{-L} e^t) e^{-(s-1)t} \leqslant (s-1)e^{-(s-1)t}\). Hence,

Integrating in the range [0, L / 2] with respect to t gives the desired bound (64).

Similarly, in the internal case one has

Hence we get the bound (65) after integration. \(\square \)

Rights and permissions

About this article

Cite this article

Filmus, Y., Hatami, H., Li, Y. et al. Information Complexity of the AND Function in the Two-Party and Multi-party Settings. Algorithmica 81, 4200–4237 (2019). https://doi.org/10.1007/s00453-018-0484-8

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s00453-018-0484-8