Abstract

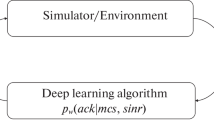

Two reinforcement learning neural network architectures that enhance the performance of a soft-decision Viterbi decoder used for forward error correction in a digital communication system are investigated and compared. Each network is designed to work as a co-processor to the demodulator, dynamically adapting the soft quantization thresholds toward optimal settings in varying noise environments. The thresholds are adjusted according to the previous performance of the Viterbi decoder, with updates occurring at fixed intervals (every 200 decoded bits output by the Viterbi decoder). To facilitate implementation in digital hardware, each weight of the neural network and the related parameters are specified as binary numbers. Computer simulation results demonstrate that, on average, a Viterbi decoder on an additive white Gaussian noise (AWGN) channel performs better with nonuniformly spaced soft-decision thresholds dynamically adjusted by these neural networks than with uniformly spaced thresholds. This approach may also be used in a variety of other digital communication applications, such as channel estimation, adaptive equalization, and signal acquisition.
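To make the idea of soft quantization thresholds concrete, the following is a minimal sketch and not the authors' implementation. It assumes antipodal signalling at ±1 and an 8-level (3-bit) quantizer. The threshold values, the function names, and the `scale_thresholds` helper are hypothetical, and the helper only stands in for whatever adjustment the neural network would produce. The 200-bit update interval is the one figure taken from the abstract.

```python
from bisect import bisect_right

# Decoded bits between threshold updates (the one value taken from the abstract).
UPDATE_INTERVAL = 200

# Hypothetical threshold sets for an 8-level quantizer; values are illustrative only.
UNIFORM = [-0.75, -0.5, -0.25, 0.0, 0.25, 0.5, 0.75]
NONUNIFORM = [-0.9, -0.5, -0.2, 0.0, 0.2, 0.5, 0.9]


def soft_quantize(sample, thresholds):
    """Map a real-valued demodulator output to an integer soft-decision level.

    `thresholds` must be sorted ascending; k thresholds give levels 0..k.
    """
    return bisect_right(thresholds, sample)


def scale_thresholds(thresholds, factor):
    """Hypothetical stand-in for an adaptive update: rescale every threshold.

    The paper's networks choose the adjustment from decoder performance;
    that logic is not reproduced here.
    """
    return [t * factor for t in thresholds]


def count_updates(num_decoded_bits):
    """Number of threshold updates that occur while decoding the given bit count."""
    return num_decoded_bits // UPDATE_INTERVAL
```

With these assumed values, a received sample of 0.22 falls in level 4 under `UNIFORM` but level 5 under `NONUNIFORM`, which shows how moving the thresholds changes the reliability information handed to the decoder.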

Cite this article

Wu, Yj., Alston, M.D. & Chau, P.M. Dynamic adaptation of quantization thresholds for soft-decision Viterbi decoding with a reinforcement learning neural network. J VLSI Sign Process Syst Sign Image Video Technol 6, 77–84 (1993). https://doi.org/10.1007/BF01581961
