Abstract

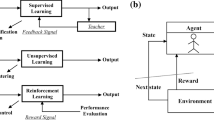

This paper presents a reinforcement connectionist system which finds and learns the suitable situation-action rules so as to generate feasible paths for a point robot in a 2D environment with circular obstacles. The basic reinforcement algorithm is extended with a strategy for discovering stable solution paths. Equipped with this strategy and a powerful codification scheme, the path-finder (i) learns quickly, (ii) deals with continuous-valued inputs and outputs, (iii) exhibits good noise-tolerance and generalization capabilities, (iv) copes with dynamic environments, and (v) solves an instance of the path finding problem with strong performance demands.

Article PDF

Similar content being viewed by others

Avoid common mistakes on your manuscript.

References

Agre, P.E., & Chapman, D. (1987). Pengi: An implementation of a theory of activity.Proceedings of the Seventh AAAI Conference (pp. 268–272).

Anderson, C.W. (1986).Learning and problem solving with multilayer connectionist systems. Ph.D. Thesis, Dept. of Computer and Information Science, University of Massachusetts, Amherst.

Anderson, C.W. (1987). Strategy learning with multilayer connectionist representations.Proceedings of the Fourth International Workshop on Machine Learning (pp. 103–114).

Arkins, R.C. (1987). Motor schema based navigation for a mobile robot: An approach to programming by behavior.Proceedings of the IEEE International Conference on Robotics and Automation (pp. 264–271).

Barto, A.G. (1985). Learning by statistical cooperation of self-interested neuron-like computing elements.Human Neurobiology, 4, 229–256.

Barto, A.G., & Anandan, P. (1985). Pattern-recognizing stochastic learning automata.IEEE Transactions on Systems, Man, and Cybernetics, 15, 360–374.

Barto, A.G., Sutton, R.S., & Anderson, C.W. (1983). Neuronlike elements that can solve difficult learning control problems.IEEE Transactions on Systems, Man, and Cybernetics, 13, 835–846.

Barto, A.G., Sutton, R.S., & Brouwer, P.S. (1981). Associative search network: A reinforcement learning associative memory.Biological Cybernetics, 40, 201–211.

Barto, A.G., Sutton, R.S. & Watkins, C.J.C.H. (1989).Learning and sequential decision making (Technical Report COINS-89-95). University of Massachusetts, Amherst, MA: Dept. of Computer and Information Science.

Blythe, J., & Mitchell, T.M. (1989). On becoming reactive.Proceedings of the Sixth International Workshop on Machine Learning (pp. 255–259).

Brady, M., Hollerbach, J.M., Johnson, T.L., Lozano-Pérez, T., & Mason, M.T., (Eds.) (1982).Robot motion: Planning and control. Cambridge, MA: MIT Press.

Brooks, R.A. (1986). A robust layered control system for a mobile robot.IEEE Journal of Robotics and Automation, 2, 14–23.

Canny, J.F. (1988).The complexiity of robot motion planning. Cambridge, MA: MIT Press.

Chapman, D., & Kaelbling, L.P. (1990).Learning from delayed reinforcement in a complex domain (Technical Report 90-11). Palo Alto, CA: Teleos Research.

Donald, B.R. (1987). A search algorithm for robot motion planning with six degrees of freedom.Artificial Intelligence, 31, 295–353.

Graf, D.H., & LaLonde, W.R. (1988). A neural controller for collision-free movement of general robot manipulators.Proceedings of the IEEE Second International Conference on Neural Networks, Vol 1 (pp. 77–84).

Gullapalli, V. (1988).A stochastic algorithm for learning real-valued functions via reinforcement feedback (Technical Report COINS-88-91). University of Massachusetts, Amherst, MA: Dept. of Computer and Information Science.

Ilari, J., & Torras, C. (1990). 2D path planning: A configuration space heuristic approach.The International Journal of Robotics Research, 9, 75–91.

Jordan, M.I., & Jacobs, R.A. (1990). Learning to control an unstable system with forward modeling. In D.S. Touretzky (Ed.),Advances in neural information procesing systems 2, 324–331. San Mateo, CA: Morgan Kaufmann.

Jorgensen, C.C. (1987). Neural network representation of sensor graphs in autonomous robot navigation.Proceedings of the IEEE First International Conference on Neural Networks, Vol IV (pp. 507–515).

Khatib, O. (1986). Real time obstacle avoidance for manipulators and mobile robots.The International Journal of Robotics Research, 5, 90–98.

Langley, P. (1985). Learning to search: From weak methods to domain-specific heuristics.Cognitive Science, 9, 217–260.

Lin, L.-J. (1990). Self-improving reactive agents: Case studies of reinforcement learning frameworks.Proceedings of the First International Conference on the Simulation of Adaptive Behavior: From Animals to Animats (pp. 297–305).

Lozano-Pérez, T. (1983). Spatial planning: A configuration space approach.IEEE Transactions on Computers, 32, 108–120.

Lozano-Pérez, T., & Wesley, M. (1979). An algorithm for planning collison-free paths among polyhedral obstacles.Communications of the ACM, 22, 560–570.

Mahadevan, S., & Connell, J. (1990).Automatic programming of behavior-based robots using reinforcement learning (Technical Report RC 16359). Yorktown Heights, NY: IBM, T.J. Watson Research Center.

Mel, B.W. (1989). MURPHY:A neurally-inspired connectionist approach to learning and performance in vision-based robot motion planning. Ph.D. Thesis, Graduate College, University of Illinois, Urbana-Champaign.

Millán, J. del R., & Torras, C. (1990). Reinforcement learning: Discovering stable solutions in the robot path finding domain.Proceedings of the Ninth European Conference on Artificial Intelligence (pp. 219–221).

Millán, J. del R., & Torras, C. (1991a). Connectionist approaches to robot path finding. In O.M. Omidvar (Ed.),Progress in neural networks series, Vol 3. Norwood, NJ: Ablex.

Millán, J. del R., & Torras, C. (1991b). Learning to avoid obstacles through reinforcement. In L. Birnbaum & G. Collins (Eds.)Machine learning: Proceedings of the Eighth International Workshop, 298–302. San Mateo, CA: Morgan Kaufmann.

Mozer, M.C., & Bachrach, J. (1989).Discovering the structure of a reactive environment by exploration (Technical Report CU-CS-451-89). Boulder, CO: University of Colorado, Dept. of Computer Science.

Munro, P. (1987). A dual back-propagation scheme for scalar reward learning.Proceedings of the Ninth Annual Conference of the Cognitive Science Society (pp. 165–176).

Rivest, R.L., & Schapire, R.E. (1987). A new approach to unsupervised learning in deterministic environments.Proceedings of the Fourth International Workshop on Machine Learning (pp. 364–375).

Robinson, A.J. (1989).Dynamic error propagation networks. Ph.D. Thesis, Engineering Department, Cambridge University, Cambridge, England.

Saerens, M., & Soquet, A. (1989). A neural controller.Proceedings of the First IEE International Conference on Artificial Neural Networks (pp. 211–215).

Schoppers, M.J. (1987). Universal plans for reactive robots in unpredictable environments.Proceedings of the Tenth International Joint Conference on Artificial Intelligence (pp. 1039–1046).

Singh, S.P. (1991). Transfer of learning across compositions of sequential tasks. In L. Birnbaum & G. Collins (Eds.)Machine learning: Proceedings of the Eighth International Workshop, 348–352. San Mateo, CA: Morgan Kaufmann.

Steels, L. (1988). Steps towards common sense.Proceedings of the Eighth European Conference on Artificial Intelligence (pp. 49–54).

Sutton, R.S. (1984).Temporal credit assignment in reinforcement learning. Ph.D. Thesis, Dept. of Computer and Information Science, University of Massachusetts, Amherst.

Sutton, R.S. (1988). Learning to predict by the methods of temporal differences.Machine Learning, 3, 9–44.

Sutton, R.S. (1990). Integrated architectures for learning, planning, and reacting based on approximating dynamic programming.Proceedings of the Seventh International Conference on Machine Learning (pp. 216–224).

Torras, C. (1990). Motion planning and control: Symbolic and neural levels of computation.Proceedings of the Third COGNITIVA Conference (pp. 207–218).

Watkins, C.J.C.H. (1989).Learning with delayed rewards. Ph.D. Thesis, Psychology Department, Cambridge University, Cambridge, England.

Werbos, P.J. (1987). Building and understanding adaptive systems: A statistical/numerical approach to factory automation and brain research.IEEE Transactions on Systems, Man, and Cybernetics, 17, 7–20.

Whitesides, S.H. (1985). Computational geometry and motion planning. In G. Toussaint (Ed.),Computational geometry. Amsterdam, New York, Oxford: North-Holland.

Williams, R.J. (1986).Reinforcement learning in connectionist networks: A mathematical analysis (Technical Report ICS-8605). San Diego, CA: University of California, Institute for Cognitive Science.

Williams, R.J. (1987).Reinforcement-learning connectionist systems (Technical Report NU-CCS-87-3). Northeastern University, Boston, MA: College of Computer Science.

Yap, C.-K. (1987). Algorithmic motion planning. In J.T. Schwartz & C.-K. Yap (Eds.),Advances in robotics, Vol. I. Algorithmic and geometric aspects of robotics, 95–143. Hillsdale, NJ: Lawrence Erlbaum.

Author information

Authors and Affiliations

Rights and permissions

About this article

Cite this article

Millán, J.D.R., Torras, C. A reinforcement connectionist approach to robot path finding in non-maze-like environments. Mach Learn 8, 363–395 (1992). https://doi.org/10.1007/BF00992702

Issue Date:

DOI: https://doi.org/10.1007/BF00992702